SK hynix Inc. Begins Mass Production of 192GB SOCAMM2 for AI Servers

Next-generation LPDDR5X module built on the 1cnm process delivers more than twice the bandwidth and over 75% higher power efficiency, targeting AI and LLM workloads

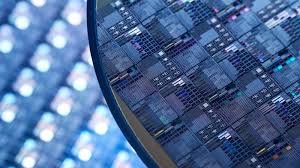

SK hynix Inc. (or "the company", www.skhynix.com) announced that it has begun mass production of the 192GB SOCAMM2, a module built to the next-generation SOCAMM2 memory module standard using LPDDR5X low-power DRAM on the 1cnm process (the sixth generation of 10-nanometer-class technology).

SOCAMM2[1] is a module that adapts low-power memory, previously used mainly in mobile products such as smartphones, for server environments. It is designed to serve as the primary memory solution for next-generation AI servers.

SK hynix emphasised that the 1cnm-based SOCAMM2 now in mass production delivers more than double the bandwidth and over 75% better power efficiency than conventional RDIMMs[2], making it an optimised solution for high-performance AI operations.

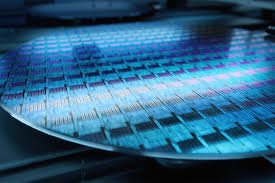

SK hynix expects the new SOCAMM2 to fundamentally resolve the memory bottlenecks encountered when training and running inference on large language models (LLMs) with hundreds of billions of parameters, playing a pivotal role in significantly accelerating overall system processing speed.

The company stated that, as the AI market shifts its focus from training to inference, SOCAMM2 is gaining significant attention as a next-generation memory solution capable of running LLMs with low power consumption. To meet the demands of its global cloud service provider (CSP) customers, SK hynix has not only prepared a supply portfolio but also stabilised its mass production system at an early stage.

"By supplying the 192GB SOCAMM2, SK hynix has established a new standard for AI memory performance," said Justin Kim, President & Head of AI Infra and Chief Marketing Officer (CMO) at SK hynix. "We will solidify our position as the most trusted AI memory solution provider through close collaboration with our global AI customers."